2026: Privacy, AI, and the New Rules of Banking Trust

Master the shift from static compliance to dynamic trust. Discover how AI-ready privacy architecture drives growth and resilience in 2026.

In the landscape of 2026, the financial services sector has reached a definitive collision point. The widespread adoption of autonomous agents and generative models has promised a new era of efficiency, yet it has simultaneously exposed a profound vulnerability in the traditional trust model. For senior decision-makers, the question is no longer whether to deploy AI, but how to do so without compromising the foundational privacy that underpins the bank-to-client relationship.

Trust in 2026 is no longer a static reputational asset; it is a dynamic technical requirement. As regulators across the globe enforce stricter mandates on data sovereignty and algorithmic transparency, the institutions thriving are those that have moved beyond "check the box" compliance. They are treating privacy not as a legal constraint, but as a core architectural feature that enables competitive differentiation.

For decades, banking privacy was managed through static consent and perimeter-based security. This model assumes that data is a passive asset stored in well-defined silos. In the age of AI, however, data is active: it is used to train models, predict behaviours, and automate decisions in real time.

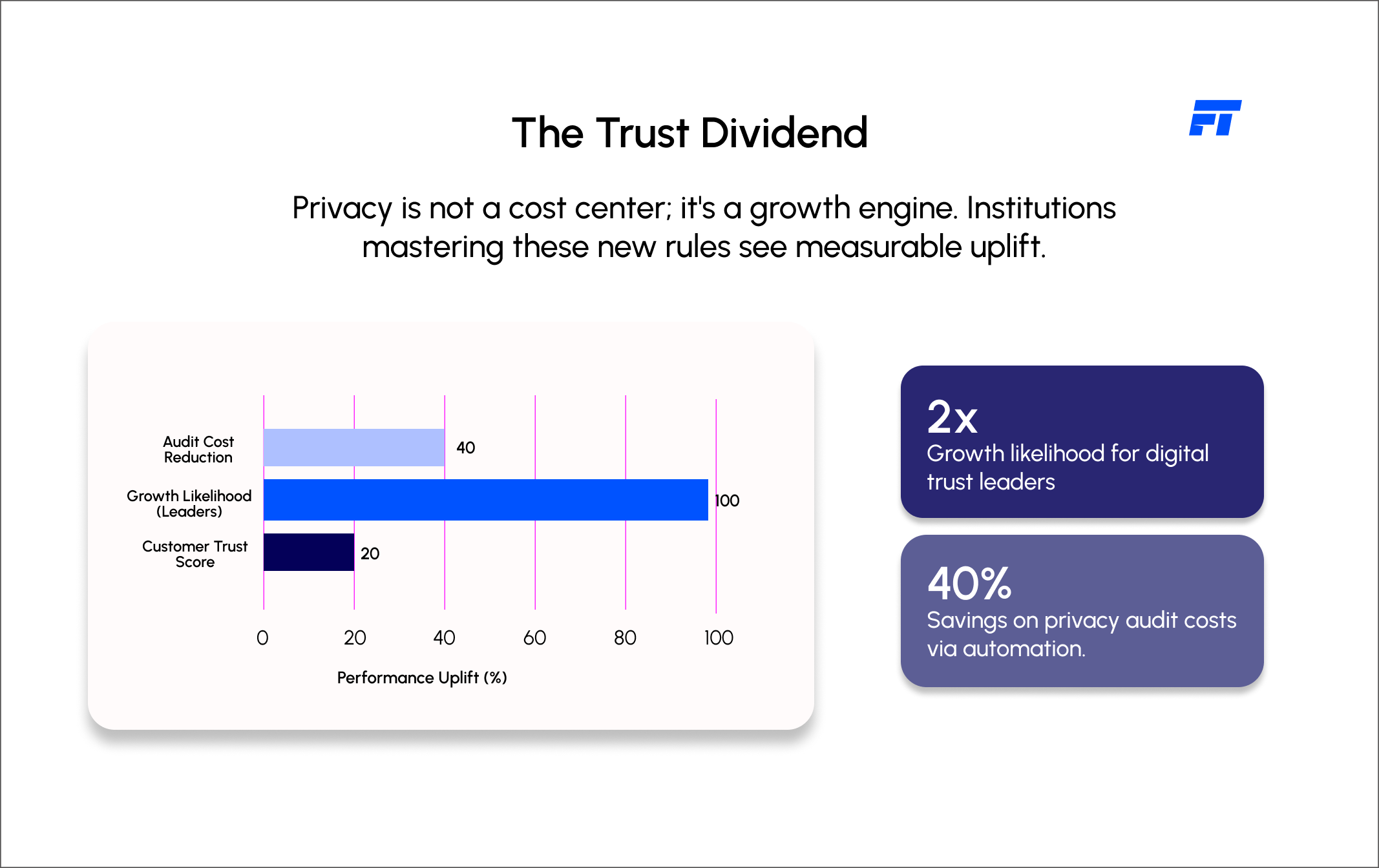

The traditional approach to privacy is fundamentally broken because it cannot handle the granular, constant flow of information required by modern AI. When a bank attempts to "bolt on" AI to a legacy core, it often creates a "black box" where data lineage is lost. This results in a dangerous tension: the more a bank uses its data to innovate, the more it risks a catastrophic breach of privacy or a regulatory fine. McKinsey reports that digital trust is now a primary driver of value, with leaders in this space twice as likely to see annualised growth of ten percent or more.

Leading institutions are responding by shifting their focus from protecting data at rest to protecting data in use. This requires a fundamental pivot toward a "compliant by design" architecture. At Fyscal Technologies, we advocate for a model where privacy and governance are baked into the modular layers of the bank, rather than being managed by external manual processes.

By hollowing out the legacy core and implementing a vendor-agnostic orchestration layer, banks can maintain absolute control over how data is exposed to AI models. This approach ensures that the institution is not tethered to the proprietary privacy standards of a single AI vendor. It also makes room for emerging technologies that let AI learn from data without ever actually "seeing" the client's sensitive personal information.

The first rule of trust in 2026 is that consent must be as dynamic as the AI itself. Static terms and conditions are no longer sufficient. Leading banks are implementing granular consent engines that allow customers to control exactly how their data is used for specific AI-driven outcomes.

This level of transparency is essential for building long-term loyalty. Consumers are significantly more likely to share data with institutions that provide clear, actionable control over their digital footprint.
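
To make the idea of purpose-level consent concrete, here is a minimal Python sketch. It assumes consent is recorded per customer and per named purpose; the class `ConsentLedger`, the function `filter_for_purpose`, and the purpose names are hypothetical illustrations, not a description of any particular product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentLedger:
    """Hypothetical in-memory record of which AI purposes each customer allows."""

    # Maps customer_id -> {purpose: time the consent was granted}
    _grants: dict = field(default_factory=dict)

    def grant(self, customer_id: str, purpose: str) -> None:
        """Record consent for one specific purpose."""
        self._grants.setdefault(customer_id, {})[purpose] = datetime.now(timezone.utc)

    def revoke(self, customer_id: str, purpose: str) -> None:
        """Withdraw consent; revoking something never granted is a no-op."""
        self._grants.get(customer_id, {}).pop(purpose, None)

    def is_permitted(self, customer_id: str, purpose: str) -> bool:
        """True only if consent for this exact purpose is currently on record."""
        return purpose in self._grants.get(customer_id, {})


def filter_for_purpose(ledger: ConsentLedger, records: list, purpose: str) -> list:
    """Keep only the records whose owners consented to this purpose."""
    return [r for r in records if ledger.is_permitted(r["customer_id"], purpose)]


ledger = ConsentLedger()
ledger.grant("c1", "fraud_scoring")
ledger.grant("c2", "fraud_scoring")
ledger.grant("c1", "marketing_personalisation")

records = [{"customer_id": "c1"}, {"customer_id": "c2"}]
marketing_batch = filter_for_purpose(ledger, records, "marketing_personalisation")
```

The design choice worth noting is that the check happens at the point where data is handed to a model, so a revocation takes effect on the next request rather than at the next terms-and-conditions update.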

The technical frontier of 2026 is defined by the adoption of Privacy-Enhancing Technologies (PETs). These tools allow banks to extract the value of data without the risk of exposure.

Leading firms are utilising synthetic data to train their models, creating high-fidelity digital twins of their customer base that contain no actual personal information. For complex cross-border transactions or collaborative fraud detection, technologies such as homomorphic encryption and federated learning are becoming standard. Homomorphic encryption lets computation run on data that stays encrypted, while federated learning trains a shared model across jurisdictions without the raw data ever leaving its original silo. The use of PETs will be a baseline requirement for seventy percent of enterprise AI projects by the end of this year.
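
The federated learning idea can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: each silo fits a one-parameter model y ≈ weight · x on its own data and shares only the resulting weight and its sample count. The function names and the sample data are hypothetical, and a production system would typically add protections such as secure aggregation on top.

```python
def local_update(weight, data, lr=0.1, epochs=50):
    """Run gradient descent on one silo's private data; raw rows never leave here."""
    for _ in range(epochs):
        # Gradient of the mean squared error for y ≈ weight * x
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight, len(data)


def federated_average(updates):
    """Combine silo weights, weighted by how many samples each silo holds."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


def train(silos, rounds=5):
    """Coordinator loop: broadcast the weight, collect local updates, average them."""
    weight = 0.0
    for _ in range(rounds):
        updates = [local_update(weight, data) for data in silos]
        weight = federated_average(updates)
    return weight


# Two hypothetical jurisdictions, each holding private (x, y) pairs where y = 3x.
silo_a = [(1.0, 3.0), (2.0, 6.0)]
silo_b = [(0.5, 1.5), (1.5, 4.5), (1.0, 3.0)]

shared_weight = train([silo_a, silo_b])
```

Only the pair `(weight, sample_count)` crosses the silo boundary in this sketch; the coordinator never sees an individual record.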

The final rule of trust is the maintenance of institutional autonomy. Relying on an external AI provider for privacy oversight is a strategic failure. A bank’s reputation is its own; it cannot be outsourced.

An AI-ready governance framework must be vendor-agnostic. It must provide an independent layer of verification that monitors every AI interaction, regardless of whether the model is provided by big tech or built in-house. This oversight ensures that the bank remains resilient to "model drift" or changes in a vendor’s privacy policy. Fyscal Technologies helps banks build these independent governance layers, ensuring that the institution remains the ultimate arbiter of its clients’ trust.
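
As a rough illustration of what an independent verification layer might look like, the Python sketch below wraps any model behind the same redaction and audit step. It assumes a model can be treated as a plain function from prompt to response; `GovernedModel`, `redact`, and the two regular expressions are hypothetical and far narrower than real PII detection would need to be.

```python
import re
from typing import Callable, Dict, List

# Illustrative patterns only; real coverage would need to be much broader.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "ACCOUNT": re.compile(r"\b\d{8,12}\b"),
}


def redact(text: str) -> str:
    """Replace each matched identifier with a labelled placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


class GovernedModel:
    """Wraps any prompt-to-response callable with redaction and an audit trail."""

    def __init__(self, name: str, model: Callable[[str], str]):
        self.name = name
        self.model = model
        self.audit_log: List[Dict[str, str]] = []

    def __call__(self, prompt: str) -> str:
        safe_prompt = redact(prompt)          # the model only sees redacted text
        response = self.model(safe_prompt)
        self.audit_log.append(
            {"model": self.name, "prompt": safe_prompt, "response": response}
        )
        return response


# A stand-in for a vendor or in-house model; any callable can be swapped in.
vendor_model = GovernedModel("vendor_a", lambda prompt: prompt.upper())
reply = vendor_model("Refund jane.doe@example.com from account 1234567890")
```

Because the wrapper depends only on the callable interface, replacing one vendor's model with another leaves the redaction rules and the audit log under the bank's control.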

The move toward an architecture of dynamic trust is not merely a defensive play; it is a catalyst for measurable business growth. Institutions that successfully navigate the new rules of trust can expect:

The horizon of 2026 demands a new kind of leadership. It requires a C-suite that understands that privacy is the fuel for AI, not the enemy of it. The institutions that will define the next decade are those that recognise trust is a technical deliverable, built on a foundation of architectural freedom and modular resilience.

Fyscal Technologies helps financial institutions bridge the gap between today’s legacy constraints and the autonomous future. By engineering the vendor-agnostic systems required for the new rules of trust, we ensure that your bank is not just compliant, but competitive. The time to architect for trust is now.

Ready to explore how Fyscal Technologies can help you achieve this